Directory of Leading Security Awareness Training Platforms

Security awareness training isn’t a “checkbox LMS.” It’s the control that decides whether your zero trust program actually holds up when attackers target humans instead of endpoints. If your training is boring, generic, unmeasured, and disconnected from real attack paths, you’ll keep seeing the same incidents: credential theft, fraudulent approvals, and “perfectly normal” actions that quietly become breaches. This directory breaks down the leading security awareness training (SAT) platforms by use case, the capabilities that separate top-tier programs from shelfware, and the evaluation criteria that procurement teams miss until it’s too late.

1) Why security awareness training platforms matter more than ever

Modern attackers don’t “hack” your firewall first—they hack attention, trust, and workflow. The fastest compromises are built on predictable human patterns: approving a push prompt, granting OAuth access, paying an “urgent invoice,” or reusing credentials across services. Those patterns fuel the exact threat arcs you’re already tracking in your 2030 threat predictions and the coming wave of AI-powered cyberattacks.

A serious SAT platform is not just “videos + quizzes.” It’s a behavior-change engine that:

Reduces identity-driven incidents (phishing → credential theft → lateral movement) by aligning with your access control model and MFA posture.

Strengthens incident detection by teaching employees what to report and how fast, supporting your incident response plan.

Cuts dwell time by improving signal quality for your SOC and your SIEM program.

Hardens remote teams against consent-grant abuse, business email compromise (BEC), and approval fraud—patterns that also show up in deepfake threat planning.

Here’s the pain point most teams won’t say out loud: your current training might be increasing risk if it creates “compliance fatigue” without teaching real decision-making under pressure. If people learn to click “Next” faster, attackers win.

2) Directory of leading security awareness training platforms

A “leading” platform is the one that matches your highest-risk workflows—not the one with the most videos. If your organization gets hit by OAuth consent abuse, generic phishing modules won’t save you. If your biggest loss event is payment fraud, training that ignores finance approvals is theater. Use the directory table as a starting point, then map each option to your most likely breach paths: identity compromise, ransomware entry, vendor abuse, and approval fraud—the same threat families called out in future threat evolution research and ransomware response planning.

The platform “types” you actually need to compare

1) Email-attack programs (phishing/BEC-first).

If your org lives in email, your SAT platform should feel like an extension of your email controls: consistent language, consistent alerts, and a reporting workflow that feeds your SIEM rather than spamming it.

2) Identity-first programs (MFA fatigue, session theft, token replay).

Training must reflect how attackers steal sessions, not just passwords—especially with modern tactics highlighted across AI-driven security shifts and long-term future skills requirements.

3) Compliance + policy programs (audit survivability).

These platforms do best when paired with real decision practice and practical controls like DLP awareness, secure sharing habits, and escalation playbooks.

4) Behavior coaching programs (micro-learning + nudges).

These win where employees are resistant, remote, overloaded, or cynical. The goal is not “pass the quiz.” The goal is “pause before approving something irreversible.”

What “leading” looks like during real incidents

When an employee reports a suspicious email, the system should route it fast and teach “what good reporting contains,” aligning to your IR plan.

When an employee clicks, the platform should coach the specific mistake (authority pressure, urgency, invoice bait, fake login), not just shame them—because shame creates under-reporting.

When leadership asks for ROI, you should show risk reduction, not content completion—linking improvements to reduced identity incidents and reduced time-to-report, the same operational logic used in security audit best practices.

3) How to choose a platform without getting trapped in “checkbox training”

Most buyers compare SAT tools like they’re comparing streaming services: number of videos, number of templates, number of languages. That’s how you end up with a “successful rollout” that fails during the first real fraud attempt.

Instead, evaluate with a security-operations lens:

1) Start with attack paths, not features

Write down your top five “human-failure breach paths”:

Credential theft leading to cloud compromise (tie it to your cloud security roadmap and future cloud trends)

Payment redirection / invoice fraud (finance workflow)

Vendor access abuse and supply chain compromise (procurement + IT)

OAuth consent grants / token theft (SaaS identity)

Deepfake-enabled executive impersonation (approvals + money movement), aligned to deepfake threat prep

Then ask: Can the platform train decision-making specifically inside these paths? If it can’t, it’s not “leading” for you.

2) Demand measurement that changes actions

Completion rates are vanity. You want metrics that force operational improvements:

Time-to-report (median and 90th percentile)

Report quality (false positive rate vs useful reports)

Repeat-failure reduction (does the same user fail repeatedly?)

Role risk (finance/HR/IT/exec risk compared to baseline)

Simulation realism (do simulated messages reach inboxes the way real mail does, so results reflect real conditions?)

If the platform can’t produce these signals cleanly, your program can’t mature—and your CTI workflows won’t translate into user behavior change.
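The first and third metrics above can be sketched in a few lines. This is a minimal illustration, not any vendor's API: the function names, the minutes-based input, and the failure threshold of 2 are all assumptions, and a real platform would export this data in its own format.

```python
from collections import Counter
from statistics import median, quantiles


def time_to_report_stats(minutes):
    """Return (median, 90th percentile) of time-to-report values in minutes.

    `minutes` is a hypothetical list: one entry per reported message,
    measured from delivery to the user's report.
    """
    if len(minutes) < 2:
        raise ValueError("need at least two reports to compute percentiles")
    # quantiles(n=10) returns nine cut points; the last one is the 90th percentile.
    p90 = quantiles(minutes, n=10, method="inclusive")[-1]
    return median(minutes), p90


def repeat_failers(failed_user_ids, threshold=2):
    """Return {user_id: failure_count} for users who failed at least `threshold` times.

    `failed_user_ids` is a hypothetical list with one entry per failed simulation.
    """
    counts = Counter(failed_user_ids)
    return {user: n for user, n in counts.items() if n >= threshold}
```

Tracking the 90th percentile alongside the median keeps one very slow report from hiding behind a healthy-looking middle, which is the reason both are listed above.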

3) Don’t ignore integration friction

The quiet killer of SAT programs is integration and ownership ambiguity:

Who owns SSO and provisioning?

Who approves simulation domains and mail delivery configuration?

Where do reports go—SOC queue, GRC dashboard, HR compliance?

Is the “report phish” button tied to actual triage?

Your SAT tool should reduce noise, not add it—especially if you already run high-volume alerting through SIEM pipelines.
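One way to make the "where do reports go" question concrete is to write the routing rule down before you buy. The sketch below is purely illustrative: the `Report` fields and the three destination names are hypothetical, and your own routing will depend on how your SOC, GRC, and HR queues are actually set up.

```python
from dataclasses import dataclass


@dataclass
class Report:
    """A user-submitted 'report phish' event (hypothetical fields)."""
    reporter: str
    is_simulation: bool
    has_link_or_attachment: bool


def route_report(report):
    """Pick a destination queue for a reported message.

    Destination names are assumptions for illustration only.
    """
    if report.is_simulation:
        # Simulated phish: credit the reporter in the SAT platform, no SOC ticket.
        return "sat_platform"
    if report.has_link_or_attachment:
        # A real message with something to click or open goes to analyst triage.
        return "soc_triage"
    # Everything else is reviewed later so it does not add alert noise.
    return "low_priority_review"
```

Keeping simulation reports out of the SOC queue is the design choice that matters here: it lets the button feed real triage without multiplying tickets.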

4) Implementation playbook: how to roll out SAT so it changes behavior

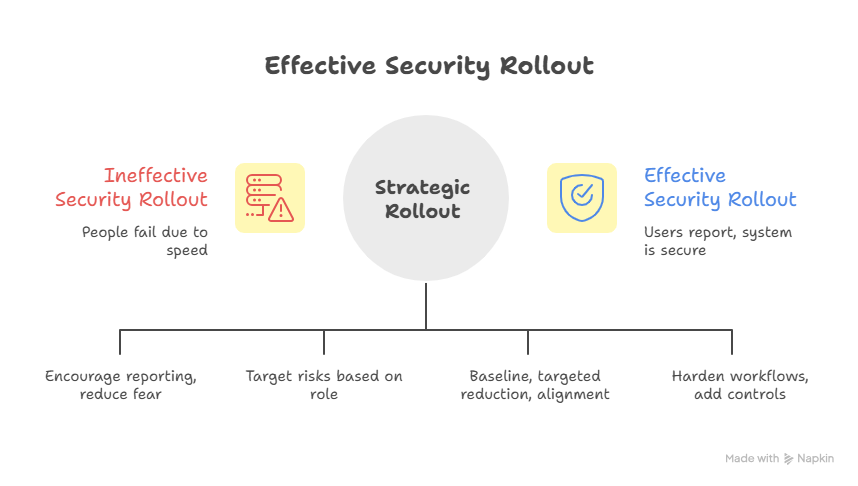

Buying a platform is easy. Changing behavior is hard—because people don’t fail due to ignorance. They fail due to speed, pressure, ambiguity, and authority. Your rollout must be designed for those conditions, not for a calm classroom.

Step 1: Build a “no-blame, high-accountability” pact

If users think simulations are traps, they will hide mistakes. Hidden mistakes become breaches. Your message should be:

Reporting fast is praised.

Mistakes are learning opportunities.

Repeated risky behavior triggers coaching—not humiliation.

This supports higher-quality reporting and aligns to your incident response execution goals.

Step 2: Segment by role and workflow risk

Role-based targeting is where “leading platforms” separate themselves:

Finance: payment fraud, vendor change requests, approval verification steps.

HR: payroll diversion, employee data requests, “confidential doc” lures.

IT/Helpdesk: reset scams, MFA fatigue, social engineering.

Executives: deepfake voice/video, wire transfer pressure, “board emergency” scenarios.

Tie this directly to your organization’s likely threat families described in future threat forecasting and 2030 predictions.
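The role segmentation above can be captured as a simple lookup that your team maintains alongside the platform. This is a sketch under stated assumptions: the role keys, the `scenarios_for` helper, and the default baseline scenario are hypothetical, while the scenario names come from the list above.

```python
# Role -> simulation themes, taken from the segmentation list above.
ROLE_SCENARIOS = {
    "finance": ["payment fraud", "vendor change requests", "approval verification"],
    "hr": ["payroll diversion", "employee data requests", "confidential doc lures"],
    "it_helpdesk": ["reset scams", "MFA fatigue", "social engineering"],
    "executive": ["deepfake voice/video", "wire transfer pressure", "board emergency"],
}

# Assumed fallback for roles without a targeted track.
DEFAULT_SCENARIOS = ["general phishing baseline"]


def scenarios_for(role):
    """Return the simulation themes for a role, or the default baseline."""
    return ROLE_SCENARIOS.get(role.strip().lower(), DEFAULT_SCENARIOS)
```

For example, `scenarios_for("Finance")` returns the three finance themes, while an unmapped role such as `"contractor"` falls back to the baseline.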

Step 3: Launch with a 30–60–90 plan

Days 1–30: Baseline + trust-building

Enable SSO/provisioning cleanly.

Run a light simulation to measure baseline (don’t go “hard mode” first).

Teach reporting behavior and exactly what happens after they report (this reduces fear).

Days 31–60: Targeted risk reduction

Introduce role-based modules and simulations.

Add “micro-coaching” immediately after failures (the best platforms do this well).

Start leadership reporting: time-to-report, repeat-failure reduction.

Days 61–90: Operational alignment

Connect reporting workflows to SOC triage where feasible.

Build “just-in-time” training triggered by current threats from CTI operations.

Formalize policies around identity, approval verification, and data handling, reinforced by training and aligned to security audit best practices.

Step 4: Fix the system, not just the user

If your users keep failing the same way, it’s often because the system encourages unsafe speed:

Approvals lack verification steps.

Vendor change requests are handled over email without controls.

MFA prompts are too frequent (fatigue is predictable).

Password resets are easy to socially engineer.

Training should reveal where the workflow is brittle—then your controls team hardens it using principles from access control models and broader framework alignment.

5) Metrics and reporting that prove SAT is reducing real risk

If you can’t prove risk reduction, your program becomes budget bait during cuts. “People completed training” will not save it. What saves it is a defensible story: we measurably reduced the probability of high-impact events.

The scorecard that executives actually respect

Exposure score (before/after)

Measure failure rates on simulations that mirror your real threat exposure: credential lures, fake approvals, vendor fraud.

Time-to-report

Median is important, but track the 90th percentile: the slowest reporters are often your highest-risk users due to workload and role pressure.

Repeat-failure concentration

Most organizations have a small group carrying a large portion of risk. Leading platforms help you identify this without making it punitive.

Role risk deltas

If finance failure rates drop and vendor fraud reporting rises, you have a clear ROI narrative.

Incident correlation

Track whether improved reporting reduces actual incident severity (fewer compromised accounts, faster containment). Connect this to your IRP outcomes and, where possible, SOC measures in your SIEM.
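Two of the scorecard items, role risk deltas and repeat-failure concentration, reduce to simple arithmetic. The sketch below assumes hypothetical inputs (per-role lists of pass/fail results and a list of user IDs with one entry per failure); the function names are illustrative and not tied to any platform.

```python
from collections import Counter


def failure_rate(results):
    """Share of failed simulations; `results` is a list of bools (True = failed)."""
    return sum(results) / len(results) if results else 0.0


def role_risk_deltas(before, after):
    """Change in failure rate per role (negative means improvement).

    `before` and `after` map role name -> list of bools. Roles missing
    from either period are skipped rather than guessed.
    """
    return {
        role: failure_rate(after[role]) - failure_rate(before[role])
        for role in before
        if role in after
    }


def failure_concentration(failed_user_ids, top_n):
    """Fraction of all failures that come from the `top_n` most frequent failers."""
    counts = Counter(failed_user_ids)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    top = sum(n for _, n in counts.most_common(top_n))
    return top / total
```

A finance delta of -0.25 means the finance failure rate fell by 25 percentage points between the two periods. A concentration of 0.5 for `top_n=1` means one user accounts for half of all failures, which points to coaching rather than another all-hands module.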

Common reporting lies that ruin programs

“Click rate went down, so risk went down.”

Not necessarily. Attackers adapt. Also, users may be learning to avoid obvious tests rather than real threats.

“We trained everyone, so we’re compliant.”

Compliance without behavior change is a breach waiting to happen, especially in identity-driven attacks highlighted in future cybersecurity compliance trends.

“Our platform has AI, so it’s better.”

AI features matter only if they improve measurement, personalization, and operational speed; otherwise it’s marketing.

6) FAQs: Security awareness training platforms (SAT) — high-impact answers

-

They buy for content volume instead of risk alignment. A smaller library that trains real approval workflows, identity threats, and reporting behavior will outperform a massive library that people speed-run. Pair platform choice with your threat model from top threat predictions and operational reality from your incident response plan.

-

Run simulations as a calibrated measurement tool, not as a punishment cycle. Start lighter, then increase realism as reporting improves. Use role-based frequency (finance/HR more targeted) and tie it to operational metrics (time-to-report, repeat failures). If your users feel harassed, reporting will drop—hurting SOC outcomes and your SIEM signal quality.

-

Security should own risk outcomes; HR can support training logistics; compliance can validate audit artifacts. The clean model: security defines scenarios and metrics, HR supports adoption, compliance confirms evidence. This aligns with security audit processes while keeping the program operational.

-

Identity-first content (MFA fatigue, OAuth consent, session theft), strong reporting workflows, and micro-learning that fits asynchronous schedules. Remote teams are disproportionately hit by workflow ambiguity and approval pressure, the same conditions that accelerate AI-powered cyberattacks.

-

Make it measurable and connected to real controls: reporting feeds triage, repeat failures trigger coaching, and simulations reflect current threats derived from CTI collection. Then fix brittle workflows using principles from access control and framework alignment.

-

Reduced probability and impact of common high-cost events: fewer compromised accounts, fewer fraudulent approvals, faster containment due to better reporting, and lower incident handling load. Tie it to operational outcomes that leadership already values: downtime avoided, fraud prevented, and response efficiency, aligned to ransomware response priorities.

-

Yes—because attackers route around controls through people. Controls reduce exposure, but humans still approve access, share data, and respond to pressure. SAT closes the gap between policy and reality, and it complements your future-facing posture in zero trust and evolving threat defense.