Annual Report on Insider Threats: Identification & Prevention (2026-2027 Original Data)

Insider threat programs fail when companies keep treating them like a narrow HR problem or a narrow SOC problem. They are neither. The most expensive insider incidents now sit at the intersection of identity, cloud access, human behavior, contractor sprawl, weak governance, and delayed response. Public benchmarks still show why this matters: IBM’s 2024 breach report found malicious insider breaches were the costliest initial attack vector at $4.99 million per breach, while Ponemon’s 2025 insider-risk research reported that the average annualized cost of insider risk rose from $15.4 million in 2022 to $17.4 million in 2025.

This report combines those public signals with an original ACSMI 2026-2027 planning model focused on how security teams should identify, prioritize, and prevent insider-driven loss before it becomes regulatory pain, board-level embarrassment, or an operational shutdown. That is the real issue. Insider risk is rarely just “bad employee behavior.” It is usually weak visibility plus excessive access plus delayed escalation. Teams already studying future cybersecurity compliance shifts, SIEM strategy, data loss prevention strategies, and incident response planning are looking at the right puzzle pieces. They just need to connect them correctly.

1. Why insider threats are becoming more expensive, less visible, and harder to dismiss

The old stereotype of the insider threat as a disgruntled employee stealing files on the way out is too narrow to be useful. Modern insider risk is broader: careless employees oversharing data, contractors keeping unnecessary access, developers pushing secrets into the wrong place, credential misuse blending into normal behavior, and staff using GenAI or SaaS tools in ways security teams cannot fully see. Fortinet’s 2025 insider-risk report says 72% lack visibility into how users handle sensitive data, 77% faced insider-driven data loss in the past 18 months, and 45% are very concerned about data sharing with GenAI tools. That is not a small detection problem. It is a visibility crisis.

That visibility crisis gets worse because legitimate access makes malicious activity harder to separate from routine activity. IBM’s breach report found credential-based attacks and malicious insider activity took especially long to identify and contain, with combined lifecycles averaging 292 days for compromised credentials and 287 days for malicious insider cases. The reason is painfully simple: defenders have to distinguish harmful use from authorized use. That is why strong teams pair access control models, privileged access management, intrusion detection system deployment, and cyber threat intelligence analysis instead of relying on one dashboard and hoping alerts explain intent.

Another reason insider risk keeps climbing is that negligence is still the volume driver even when malicious insiders get more headlines. Ponemon’s 2025 findings say 55% of insider incidents in its research were caused by employee negligence, with an average annual cost of $8.8 million to remediate those incidents. That matters because many organizations still build programs as if only sabotage matters. In practice, the faster win often comes from reducing careless sharing, insecure workarounds, poor offboarding, excessive entitlements, and unmanaged data movement. Teams reading future skills for cybersecurity professionals, predicting future cybersecurity audit practices, cybersecurity frameworks like NIST, ISO, and COBIT, and security audits best practices should take that as a warning: maturity is as much about reducing ordinary unsafe behavior as catching extraordinary malice.

| Insider Risk Signal | What It Looks Like in Real Life | Likely Root Cause | Business Exposure | Primary Owner | Best First Control |
|---|---|---|---|---|---|
| Repeated large file downloads | Unusual export before resignation or role change | Overbroad access, weak monitoring | IP loss, client-data exposure | Security + HR | UEBA + entitlement review |
| Sensitive files sent to personal email | User forwards reports “to work from home” | Poor policy enforcement | Data leakage, compliance failure | Security | DLP blocking and coaching |
| GenAI uploads of internal content | Staff paste code or customer data into public tools | Lack of AI-use controls | IP loss, privacy risk | Security + Legal | Approved AI guardrails |
| Dormant privileged accounts still active | Former admins retain access | Weak offboarding | Privilege abuse, ransomware blast radius | IT + IAM | PAM + automated deprovisioning |
| Contractor access beyond project end | Third parties still logging in months later | Ownership gaps | Third-party compromise | Vendor Mgmt + Security | Time-bound access |
| Unusual after-hours approvals | Midnight MFA approvals or admin changes | Compromised or coerced insider | Identity takeover | SOC | Risk-based MFA + alerting |
| Mass cloud-share creation | Sensitive folders widely shared internally | Convenience over governance | Lateral exposure | Cloud Admin + Data Owner | Default-deny sharing rules |
| USB or removable media spikes | Unexpected copying to external storage | Weak endpoint controls | Data exfiltration | Endpoint Team | Device-control policy |
| Source-code cloning surges | Bulk repo cloning by one engineer | No behavioral baseline | IP theft, competitive leakage | AppSec + DevOps | Repo analytics + least privilege |
| Access requests without business need | User asks for “temporary” privileged roles | Approval fatigue | Privilege creep | IAM | JIT access workflow |
| Failed attempts to disable logging | Admin alters audit settings | Malicious intent or concealment | Investigation blind spots | Security Engineering | Immutable logging |
| Shared accounts still in use | Teams log in with one generic admin account | Poor accountability design | No attribution, audit failure | IT Ops | Named admin identities |
| Repeated policy exceptions | Business units bypass controls “for speed” | Weak governance tone | Control erosion | Leadership + GRC | Exception review board |
| Unapproved SaaS adoption | Employees move files into shadow tools | Productivity workaround culture | Unknown data residency risk | Security + IT | CASB/SaaS discovery |
| Frequent HR issue plus access anomalies | Performance action followed by unusual queries | Potential retaliatory behavior | Data theft, sabotage | HR + Security | Joint escalation process |
| Customer records browsed without case need | Snooping into files unrelated to role | Lax oversight | Privacy breach, regulatory penalties | Data Owner + Compliance | Context-aware monitoring |
| Bulk ticket closures hiding alerts | Analyst suppresses or closes too many cases | Burnout or concealment | Missed incidents | SOC Manager | Case-quality review |
| Sudden permission changes in finance systems | User expands access near payout periods | Fraud opportunity seeking | Financial loss | Finance IT + Security | Segregation-of-duties checks |
| Logins from impossible travel patterns | Normal user appears across distant geos | Stolen credentials or token misuse | Account compromise | SOC | Session risk analytics |
| Repeated access to merger or legal folders | Curiosity turns into opportunistic exposure | Need-to-know not enforced | Insider trading, disclosure risk | Legal + Security | Tighter classification controls |
| Endpoint encryption disabled | User turns off safeguards for convenience | Weak hardening enforcement | Lost-device exposure | Endpoint Team | Tamper protection |
| Mass printing of sensitive documents | Paper exports before departure | Digital-only monitoring assumptions | Untracked data theft | Physical Security + IT | Secure print logging |
| Security alerts ignored by managers | Repeated override of risky behavior notices | Weak accountability culture | Repeat insider events | Business Leadership | Escalation SLAs |
| Secrets found in tickets or chats | Passwords or keys pasted into collaboration tools | Unsafe support practices | Credential compromise | DevOps + Support | Secret scanning |
| Admin actions without change records | Critical changes occur off process | Weak change governance | Sabotage or accidental outage | ITSM + Security | Mandatory change linkage |
| High-risk departing employee not reviewed | No focused monitoring during exit period | Poor offboarding prioritization | Last-mile exfiltration | HR + Security | Departure risk checklist |
| Overly broad data lake access | Hundreds can query sensitive datasets | Growth without governance | Mass exposure potential | Data Platform + Security | Role redesign and segmentation |

2. Identification: the signals security teams should monitor before the breach narrative writes itself

Most insider-threat programs are too late because they look for “proof” instead of looking for patterns. Identification should start with behavior shifts, entitlement shifts, and business-context shifts. That means monitoring not only downloads and transfers, but also approvals, privilege changes, sharing activity, risky SaaS use, access outside role norms, attempts to weaken logging, and deviations around resignation, disciplinary action, layoffs, or vendor transitions. CISA’s insider-threat guidance centers on defining, detecting, and identifying threats through a structured program rather than isolated technical controls. Its new 2026 material also emphasizes building a multi-disciplinary insider threat management team, because one team alone rarely sees the full story.

That multi-disciplinary model matters because the best indicators often sit in different systems. Security may see unusual queries. IAM may see permission elevation. HR may know the employee is in conflict or departing. Legal may know a sensitive deal is underway. Operations may see process abuse. If those signals never converge, the organization stays blind until the attacker, regulator, customer, or journalist explains what happened first. IBM’s 2024 report shows attacker-disclosed breaches averaged $5.53 million, versus $4.55 million when security teams identified the breach themselves. That gap is a brutal financial lesson in why early internal identification matters. Teams sharpening incident response execution, DLP tool strategy, network monitoring and security tooling, and endpoint security forecasting should treat insider-risk detection as an evidence-fusion problem, not a single alert problem.
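To make the evidence-fusion idea concrete, here is a minimal Python sketch, not a production design. It assumes each team can export its observations as simple records; the `Signal` type, the `SOURCE_WEIGHTS` values, and the `min_sources` threshold are all hypothetical and would need tuning to an organization's own risk model. The point it illustrates is that a user flagged by several independent sources deserves joint review, while a single-source signal stays with its owning team.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    user: str
    source: str  # team or system that raised it, e.g. "security", "iam", "hr"
    kind: str    # short label for the observation


# Hypothetical weights (an assumption, not taken from any cited report).
SOURCE_WEIGHTS = {"security": 2, "iam": 2, "hr": 3, "legal": 3, "operations": 1}


def fuse_signals(signals, min_sources=2):
    """Group signals by user and surface users flagged by several independent sources."""
    by_user = defaultdict(list)
    for s in signals:
        by_user[s.user].append(s)

    cases = []
    for user, items in by_user.items():
        sources = sorted({s.source for s in items})
        if len(sources) < min_sources:
            continue  # a single-source signal stays with its owning team
        score = sum(SOURCE_WEIGHTS.get(src, 1) for src in sources)
        cases.append({"user": user, "sources": sources, "score": score})
    # Highest combined score first, so reviewers see converging evidence early.
    return sorted(cases, key=lambda c: c["score"], reverse=True)


signals = [
    Signal("avery", "security", "unusual_queries"),
    Signal("avery", "iam", "permission_elevation"),
    Signal("avery", "hr", "departing"),
    Signal("blake", "security", "unusual_queries"),
]
cases = fuse_signals(signals)
for case in cases:
    print(case)
```

In this example only "avery" becomes a joint case, because security, IAM, and HR each hold one piece of the picture; "blake" has a single security signal and is left to normal SOC handling.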

A mature identification program also understands that not every insider event starts as malice. Some begin as convenience. The employee wants to move faster, bypass a slow control, use a personal AI tool, share a dataset for legitimate collaboration, or keep access “just in case.” That is why behavior-aware detection matters more than static policy language. Fortinet’s 2025 report explicitly points to SaaS blind spots and GenAI-related exposure as key drivers of insider-driven data loss. So identification now has to cover modern workflows, not just legacy endpoints and email. Security teams tracking future cloud security trends, AI-powered cyberattack evolution, Zero Trust innovation, and privacy-regulation trends already know the surface area has shifted. Insider-risk monitoring has to shift with it.

3. Prevention: the controls that actually reduce insider risk instead of just documenting it

Prevention starts with access discipline, because you cannot meaningfully reduce insider damage if too many people can still reach too much data too easily. Least privilege, just-in-time access, privileged session controls, strong offboarding, periodic entitlement reviews, contractor expiry, and segmentation remain foundational. This is not glamorous work, but it is where real damage reduction lives. When IBM says malicious insider breaches were the costliest initial attack vector in its 2024 study, that is your cue to reduce the amount of damage a trusted identity can do once something goes wrong.
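A periodic entitlement review can start as something very small. The Python sketch below is illustrative only: the `Account` fields, the 90-day dormancy threshold, and the sample accounts are assumptions, and a real review would pull this data from the identity provider or PAM tool rather than a hand-built list. It checks three of the foundations named above: contractor expiry, expired access, and dormant privileged accounts.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Account:
    name: str
    is_contractor: bool
    privileged: bool
    last_login: date
    access_expires: Optional[date] = None  # None means no expiry was ever set


def review_accounts(accounts, today, dormant_after_days=90):
    """Return (account name, reason) pairs that deserve an entitlement review."""
    findings = []
    cutoff = today - timedelta(days=dormant_after_days)
    for a in accounts:
        if a.is_contractor and a.access_expires is None:
            findings.append((a.name, "contractor access has no expiry"))
        elif a.access_expires is not None and a.access_expires < today:
            findings.append((a.name, "access is past its expiry date"))
        if a.privileged and a.last_login < cutoff:
            findings.append((a.name, "dormant privileged account"))
    return findings


today = date(2026, 6, 1)
accounts = [
    Account("vendor-ops", True, False, date(2026, 5, 20)),
    Account("old-admin", False, True, date(2025, 12, 1)),
    Account("contractor-dev", True, False, date(2026, 5, 30), date(2026, 4, 30)),
    Account("staff-user", False, False, date(2026, 5, 31)),
]
findings = review_accounts(accounts, today)
for name, reason in findings:
    print(f"{name}: {reason}")
```

The output of a check like this is a review queue, not an automatic revocation list; deprovisioning decisions still belong to the account owner and IAM team.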

The second prevention layer is data movement control. You need to know which data matters most, where it lives, who touches it, how it is shared, and what “normal” looks like by team and role. Then enforce accordingly. That is where DLP strategies and tools, encryption standards, public key infrastructure basics, and cloud security tools stop being theoretical and become practical insider-risk controls. Fortinet’s report says many organizations still lack visibility into how users handle sensitive data. If you cannot map movement, you cannot prevent harmful movement.
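One way to express "normal by team and role" is a per-role baseline. The sketch below is a simplified illustration in Python, assuming daily transfer volumes in megabytes are already available per role; the median baseline, the 5x multiplier, and the sample figures are hypothetical choices, not values from the reports cited here. Commercial UEBA and DLP tools use far richer models.

```python
from statistics import median


def build_baselines(history):
    """history maps role -> list of past daily transfer volumes (MB) for that role."""
    return {role: median(volumes) for role, volumes in history.items() if volumes}


def flag_unusual(events, baselines, multiplier=5.0):
    """Return events whose volume exceeds multiplier x the median volume for the role."""
    flagged = []
    for user, role, volume_mb in events:
        baseline = baselines.get(role)
        if baseline is None:
            # No history means the movement cannot be judged; surface it for mapping.
            flagged.append((user, role, volume_mb, "no baseline for role"))
        elif volume_mb > multiplier * baseline:
            flagged.append((user, role, volume_mb, "above role baseline"))
    return flagged


history = {
    "engineering": [200, 250, 300, 220, 260],
    "finance": [20, 25, 30, 22, 18],
}
baselines = build_baselines(history)
events = [
    ("casey", "engineering", 900),
    ("devon", "finance", 400),
    ("ellis", "legal", 10),
]
flagged = flag_unusual(events, baselines)
for row in flagged:
    print(row)
```

Here a 900 MB transfer from an engineer is not flagged, while a 400 MB transfer from finance is, because the same volume means different things in different roles. The unmapped "legal" role is flagged for the opposite reason: without a baseline, there is no definition of normal to enforce against.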

The third layer is behavioral and cultural prevention. Ponemon’s 2025 research makes this unavoidable: negligence remains the most prevalent insider incident type. That means prevention is not just about catching bad actors; it is about reducing routine unsafe choices before they become breaches. High-performing programs do not merely send annual awareness slides. They pair training with monitoring, targeted coaching, manager accountability, clear AI-use rules, easy-to-use approved alternatives, and consistent exception governance. Mimecast’s 2026 human-risk reporting says only 28% of organizations combine regular awareness training with continuous monitoring, despite broad recognition that protection is incomplete. That gap is exactly where many insider programs fail. Teams investing in security awareness platforms, future cybersecurity standards, cybersecurity legislation for SMBs, and future audit practice changes should read that as a design flaw they can actually fix.

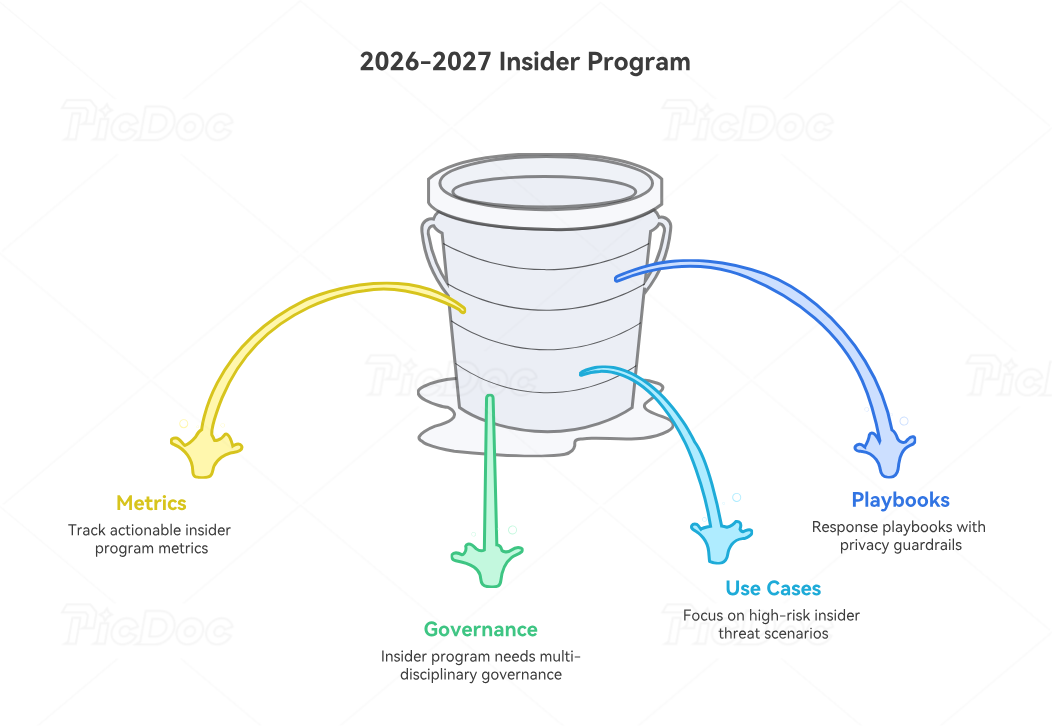

4. The 2026-2027 insider-threat operating model organizations should adopt now

A usable insider-threat program for 2026-2027 needs four layers. First, a clear governance model. Someone must own the program, but it cannot be run as a silo. CISA’s current direction is explicit: insider-threat mitigation works best with a multi-disciplinary team bringing together security, HR, legal, physical security, operations, and other relevant stakeholders. If your program still depends on one analyst and one policy, it is not a program. It is a hope strategy.

Second, the program needs a small number of priority use cases tied to material business risk. Start with departing employees, contractor offboarding, privileged access abuse, large-scale exports, sensitive-record snooping, SaaS oversharing, and GenAI data leakage. Those scenarios map cleanly to real damage. They also let teams wire together telemetry from IAM, endpoints, DLP, cloud platforms, collaboration tools, and HR signals. This is where cloud security engineering pathways, SOC analyst career development, SOC-to-manager advancement, and cybersecurity manager career pathways align with real-world value: insider-risk detection is not just a tool issue, it is an operations design issue.

Third, the program needs metrics that executives can understand. Count not only incidents, but also revoked excessive entitlements, time to disable access for departures, number of high-risk exceptions approved, high-risk data movements blocked, percentage of critical systems under immutable logging, and average time from risky behavior to review. IBM’s data is a reminder that delayed detection and containment inflate cost. So the metrics that matter most are the ones that show how quickly the organization identifies risky behavior and how safely it intervenes.
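Two of the metrics listed above can be computed from very plain records. The Python sketch below is a rough illustration under stated assumptions: departures are given as (left_at, access_disabled_at) timestamp pairs, systems as (name, is_critical, has_immutable_logging) tuples, and all sample values are invented for the example.

```python
from datetime import datetime
from statistics import mean


def hours_between(start, end):
    """Elapsed time between two datetimes, in hours."""
    return (end - start).total_seconds() / 3600


def program_metrics(departures, systems):
    """Compute average time to disable access and immutable-logging coverage."""
    disable_hours = [hours_between(left, disabled) for left, disabled in departures]
    critical = [s for s in systems if s[1]]
    covered = [s for s in critical if s[2]]
    return {
        "avg_hours_to_disable_access": (
            round(mean(disable_hours), 1) if disable_hours else None
        ),
        "pct_critical_with_immutable_logging": (
            round(100 * len(covered) / len(critical), 1) if critical else None
        ),
    }


departures = [
    (datetime(2026, 3, 2, 17, 0), datetime(2026, 3, 2, 19, 0)),   # disabled after 2 hours
    (datetime(2026, 3, 9, 17, 0), datetime(2026, 3, 10, 15, 0)),  # disabled after 22 hours
]
systems = [
    ("erp", True, True),
    ("crm", True, False),
    ("hr-db", True, True),
    ("wiki", False, False),
]
metrics = program_metrics(departures, systems)
print(metrics)
```

Averages hide outliers, so a single departure left active for weeks can matter more than the mean suggests; reporting the worst case alongside the average is a reasonable extension.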

Fourth, the program needs response playbooks with proportionality and privacy guardrails. Not every anomaly deserves a scorched-earth reaction. The response model should separate coaching cases, control-remediation cases, negligence investigations, compromised-account cases, and deliberate misconduct cases. That distinction matters legally, culturally, and operationally. Done well, it improves trust rather than destroying it.
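The five response tracks can be written down as an explicit routing rule so that proportionality is a documented decision rather than an improvised one. The Python sketch below is purely illustrative: the `Finding` fields and the order of the checks are assumptions made for this example, and any real routing logic would need review by HR, legal, and privacy stakeholders before use.

```python
from dataclasses import dataclass
from enum import Enum


class Track(Enum):
    COACHING = "coaching"
    CONTROL_REMEDIATION = "control remediation"
    NEGLIGENCE_INVESTIGATION = "negligence investigation"
    COMPROMISED_ACCOUNT = "compromised account"
    DELIBERATE_MISCONDUCT = "deliberate misconduct"


@dataclass
class Finding:
    credentials_suspected_stolen: bool = False
    evidence_of_intent: bool = False      # e.g. concealment or tampering with logs
    repeat_occurrence: bool = False       # same unsafe behavior after prior coaching
    control_gap_enabled_it: bool = False  # the action should have been blocked


def triage(finding):
    """Route a finding to one response track; the most serious explanation wins."""
    if finding.credentials_suspected_stolen:
        return Track.COMPROMISED_ACCOUNT
    if finding.evidence_of_intent:
        return Track.DELIBERATE_MISCONDUCT
    if finding.repeat_occurrence:
        return Track.NEGLIGENCE_INVESTIGATION
    if finding.control_gap_enabled_it:
        return Track.CONTROL_REMEDIATION
    return Track.COACHING


first_time_mistake = triage(Finding())
missing_guardrail = triage(Finding(control_gap_enabled_it=True))
log_tampering = triage(Finding(evidence_of_intent=True, control_gap_enabled_it=True))
print(first_time_mistake.value, missing_guardrail.value, log_tampering.value)
```

The default path is coaching, which reflects the point above that not every anomaly deserves a scorched-earth reaction; escalation requires a specific, recorded reason.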

5. Income, governance, and market implications: why insider-threat capability is becoming a premium cybersecurity skill

Insider-threat work is no longer a niche specialty. It is becoming a premium capability across governance, detection, compliance, cloud security, and advisory roles because boards, auditors, and regulators increasingly expect organizations to understand how trusted access is used. That is one reason professionals building careers toward cybersecurity compliance officer roles, cybersecurity auditor pathways, CISO tracks, and security manager to director progression should understand insider risk deeply: it sits right in the middle of governance credibility and operational resilience.

The market side is also changing. Advisory firms and internal leaders who can translate insider risk into access design, cloud governance, human-risk reduction, and incident-response readiness are more commercially valuable than teams that only produce generic awareness decks after something goes wrong. Ponemon’s cost growth and IBM’s breach-cost findings both strengthen the budget case. When leadership sees that insider-related damage is not abstract, the conversation shifts from “Do we need an insider-risk program?” to “How fast can we make this measurable and defensible?”

That creates a serious opportunity for practitioners who can combine DLP strategy, SIEM maturity, audit evidence discipline, privacy-regulation insight, and future workforce skills into one coherent operating model. The winner in 2026-2027 will not be the organization with the most dashboards. It will be the one that sees risky human behavior early, constrains access intelligently, responds proportionally, and documents decisions well enough to stand up under scrutiny.

6. FAQs

-

They define insider threat too narrowly. If the program only looks for malicious employees stealing files, it misses negligence, credential misuse, shadow SaaS behavior, GenAI leakage, contractor overreach, and role-based overexposure. The strongest programs track harmful use of legitimate access, not just obviously hostile intent.

-

By volume, often yes. Ponemon’s 2025 insider-risk findings say 55% of insider incidents in the study were caused by employee negligence. That does not mean malicious insiders are unimportant. It means organizations that ignore careless behavior are leaving the largest volume driver untreated.

-

Start with the highest-consequence use cases: excessive privileged access, departing employees, contractor offboarding, unusual bulk exports, sensitive-record snooping, shadow SaaS use, and GenAI uploads involving sensitive data. That gives you meaningful risk coverage before you attempt a giant all-at-once monitoring rollout.

-

Because the activity often uses legitimate credentials and familiar workflows. IBM’s 2024 breach research found long identification and containment times for credential-based and malicious insider cases, which reflects how difficult it is to separate valid-looking activity from harmful intent.

-

At minimum: security, IAM, HR, legal, operations, and the relevant business/data owners. CISA’s current guidance stresses a multi-disciplinary insider-threat management model because the full risk picture rarely lives in one system or one department.

-

It has tightly governed access, role-aware monitoring, SaaS and GenAI visibility, strong offboarding, behavior-based detection, clear escalation thresholds, privacy guardrails, and executive metrics tied to business impact. Mature programs do not just log events. They reduce exposure, shorten detection time, and prove that risky access is being actively controlled.